Systems Engineering

The Systems Engineering group was created to ensure that the SAR system concepts developed in the institute are properly understood and implemented in the ground segment of radar satellite missions. The group therefore focuses on systems engineering for ground segment systems relevant to satellite SAR sensors. We define what is needed on the ground to support and drive SAR mission execution, implement these new systems, and operate them once the mission has been launched.

In recent years we have witnessed firsthand the steady increase in complexity of radar satellite concepts, and thus also of the projects set up to realize them. The ground segments of satellite missions have turned into large, heterogeneous systems of systems built through the joint effort of various engineering disciplines. This has led us to build expertise in the following areas.

Areas of Expertise

Systems Engineering and Project Management

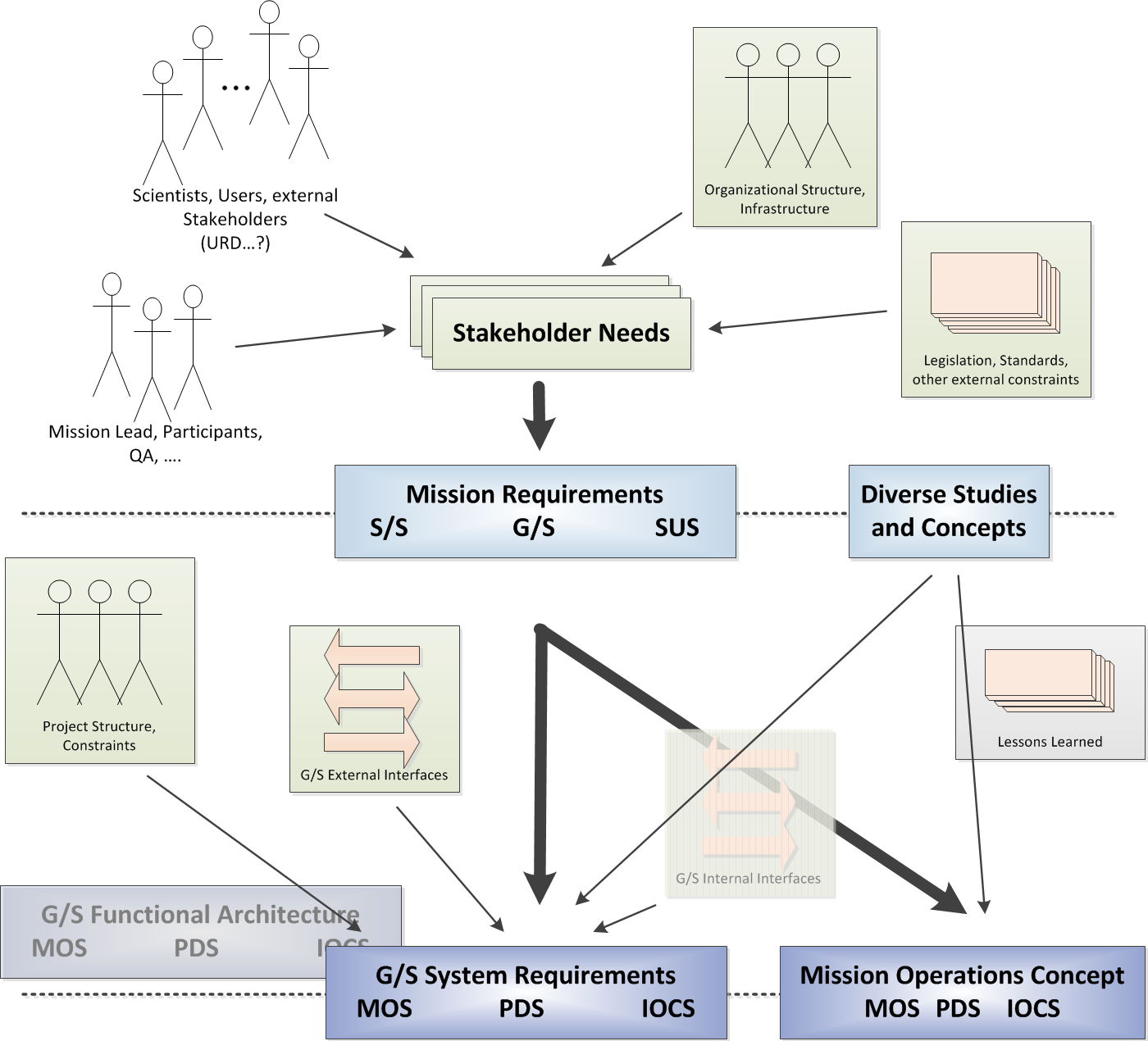

Our group implements systems engineering processes throughout the life cycle of ground segment systems relevant to satellite SAR sensors. We are thus involved from the early stages of a satellite project, i.e. feasibility studies in Phase A (ECSS definition), up to operations and disposal (Phases E and F respectively). Along this long journey we work closely with many different teams from DLR and from our industry partners. By performing various analyses we identify the system boundaries as well as the other systems, users, operators, etc. that have a stake in our systems. Through interaction with these stakeholders we come to understand their needs and transform them into requirements that have to be included in the system design.

The next major step is the layout of the system architecture and the specification of its internal and external interfaces. In parallel we plan and then actively participate in the system integration, verification and validation activities. Generating and executing concepts for operations, maintenance and system extensibility allows us to better understand the system and prepare it for its utilization. Apart from assuming technical responsibility for the development of ground segment systems, our group is also commissioned with project management. This includes tasks such as supervising project execution, decision making, and performing trade-off analyses.

Software Engineering / Software Development

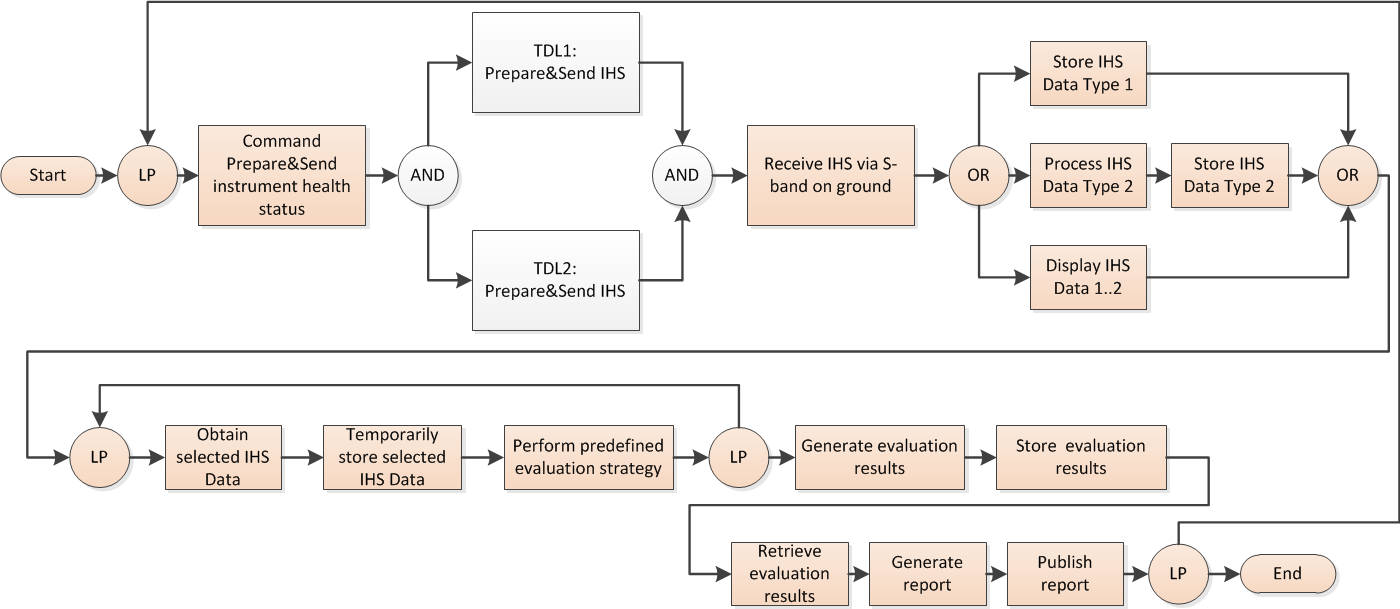

Software systems constitute a considerable part of a satellite ground segment. Many of them function without human interaction and must have a very high level of reliability. Although each software implementation has its unique functions, its building blocks resemble those of other software within the same project and across different projects. That is why we put effort into improving software development efficiency by defining the design and maintenance process and by looking into the automation of module testing procedures.

We have gained expertise in the development of software solutions for autonomous SAR instrument and data take commanding and for SAR instrument and SAR product verification, as well as diverse tools for simulating ground and space segment functions external to our systems, such as mission planning or satellite synchronization. In addition, we provide assistance for software projects within our institute, for example those based on big data analysis or requiring performance-optimized calculations, and we implement some of their core parts as well.

SAR Payload Operations

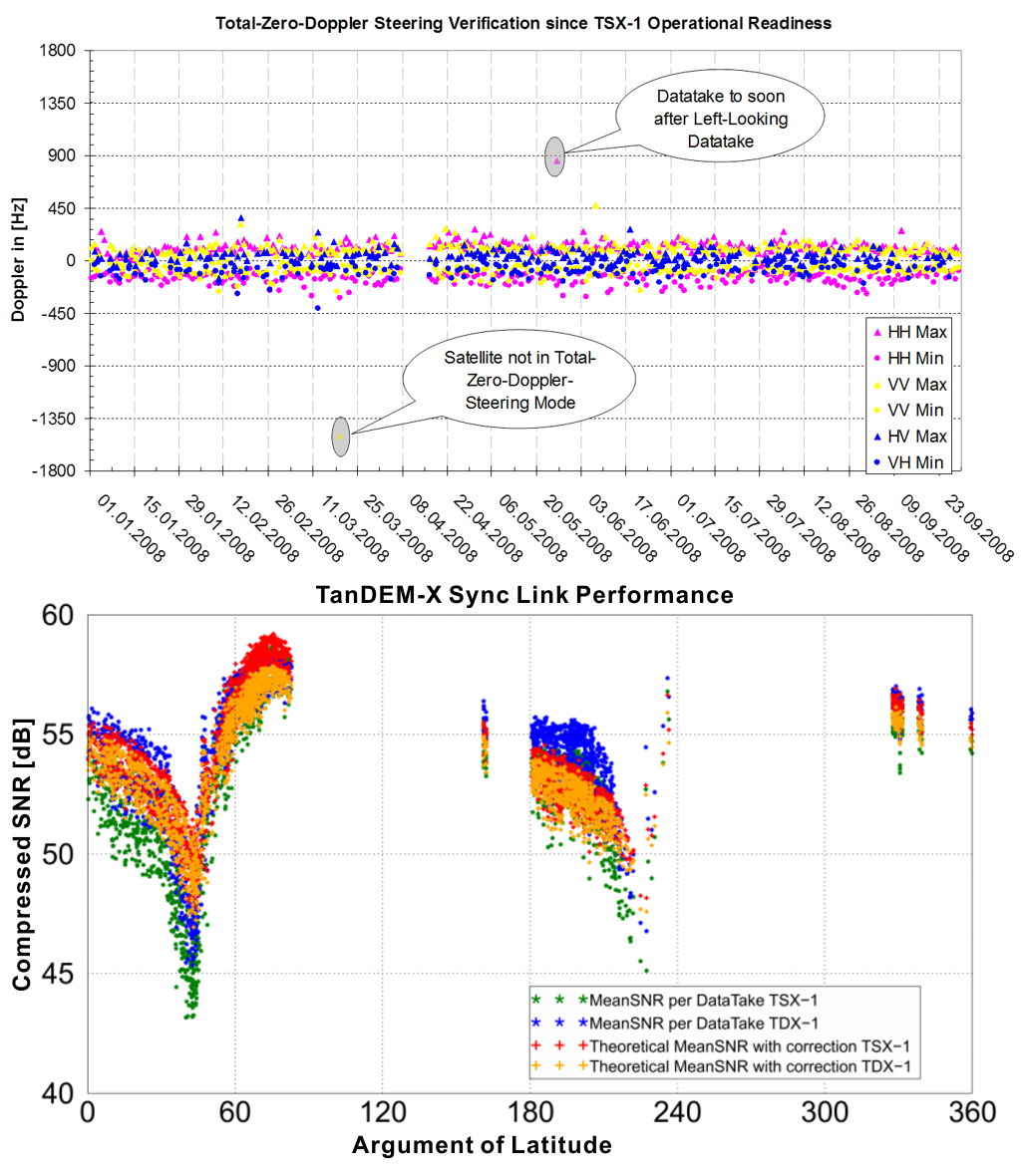

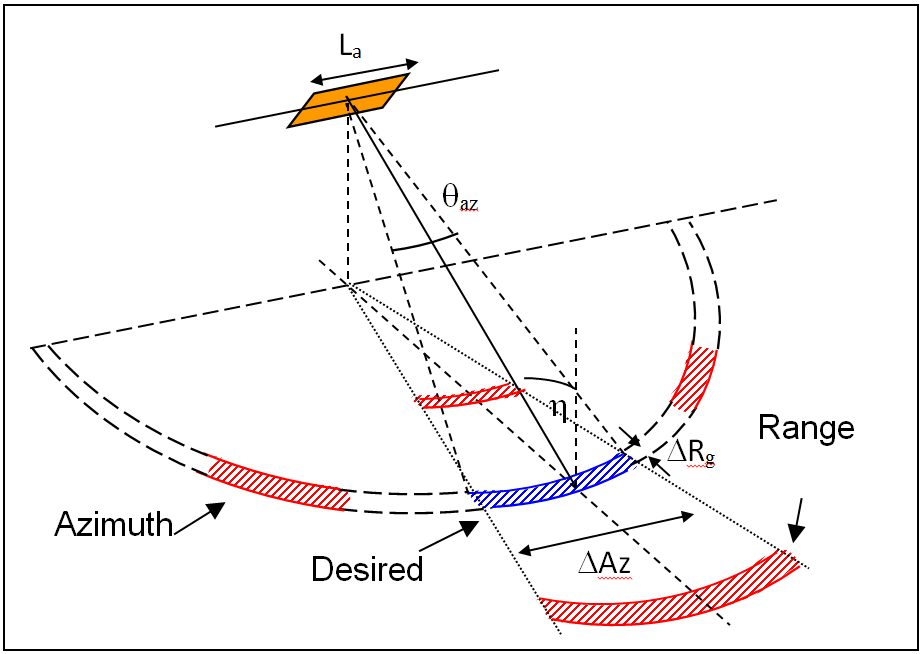

One very important task is the early involvement in the development of the SAR instrument. On the one hand, this allows us to gain extensive knowledge of the instrument design, which is subsequently transferred to the development of the ground segment. On the other hand, we are able to contribute our expertise to the specification of the instrument acquisition modes and operations. We also closely follow and participate in the instrument test and characterization program.

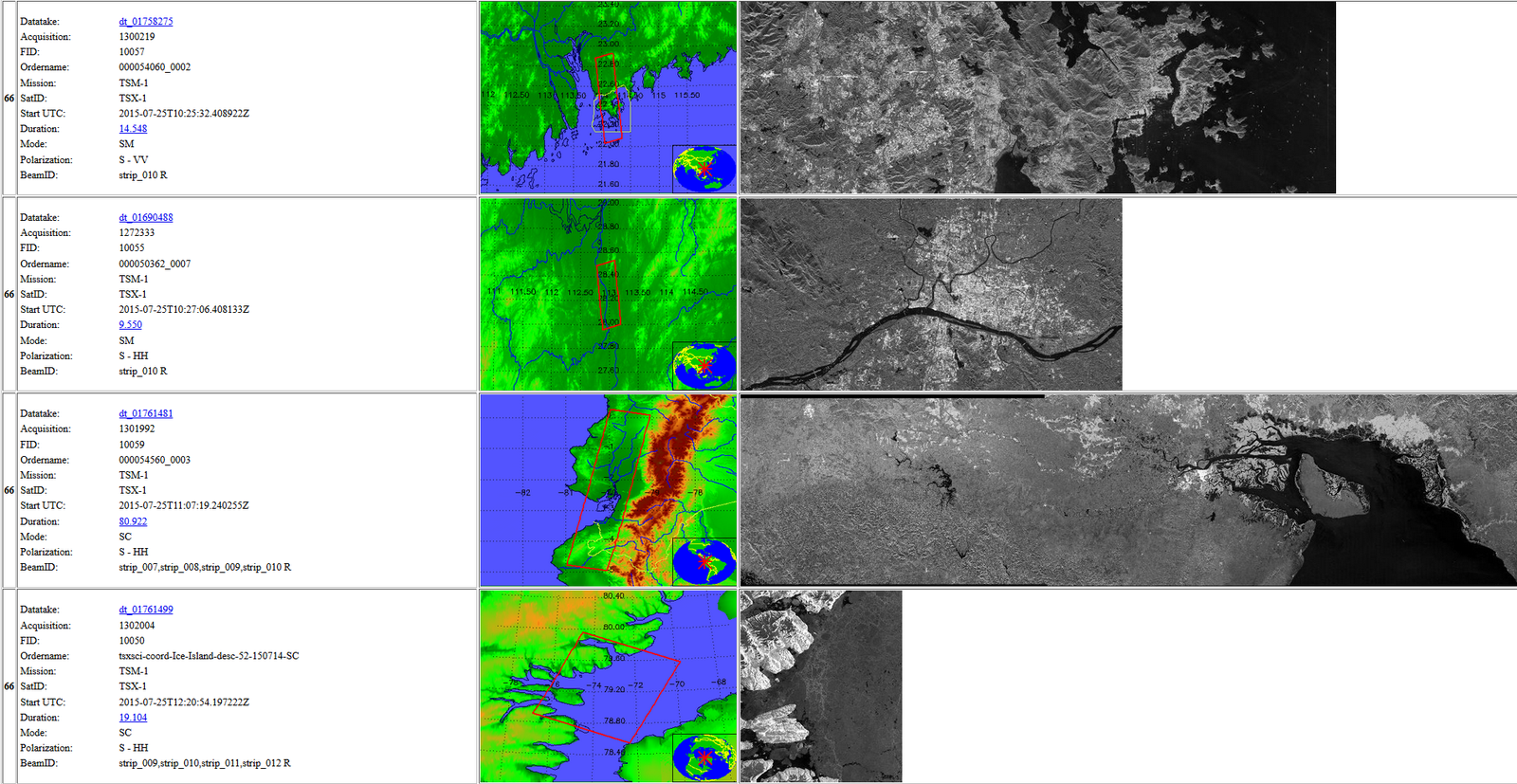

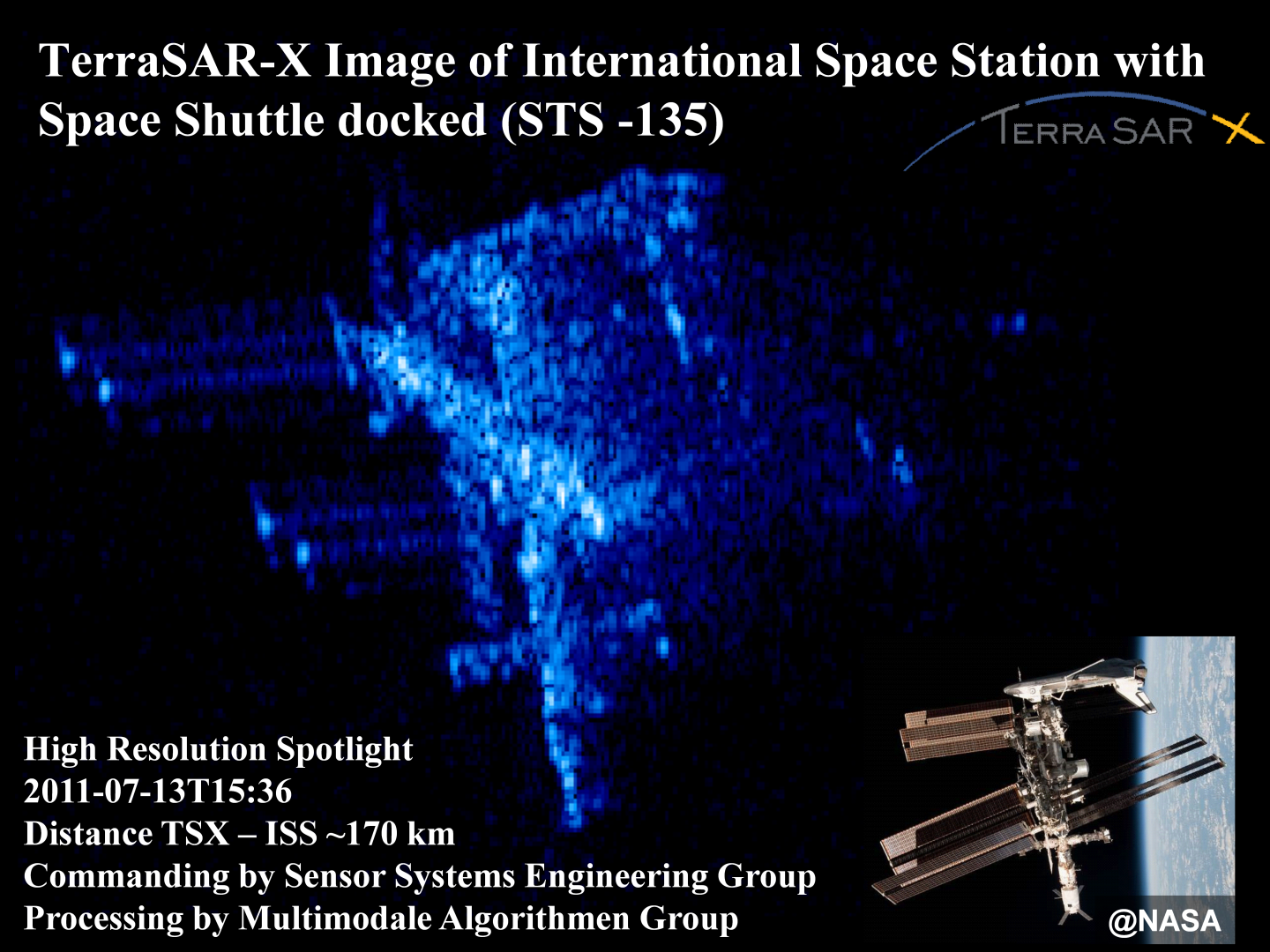

Having gained the necessary instrument understanding, we develop data take commanding concepts, which we implement and also execute in the later project phases. By exploiting the full instrument flexibility, we prepare and execute additional SAR experiments that serve to test and verify next-generation radar technologies.

During Phase E we constantly monitor the operation of the SAR instrument and its health status and perform preventive and corrective maintenance when needed.

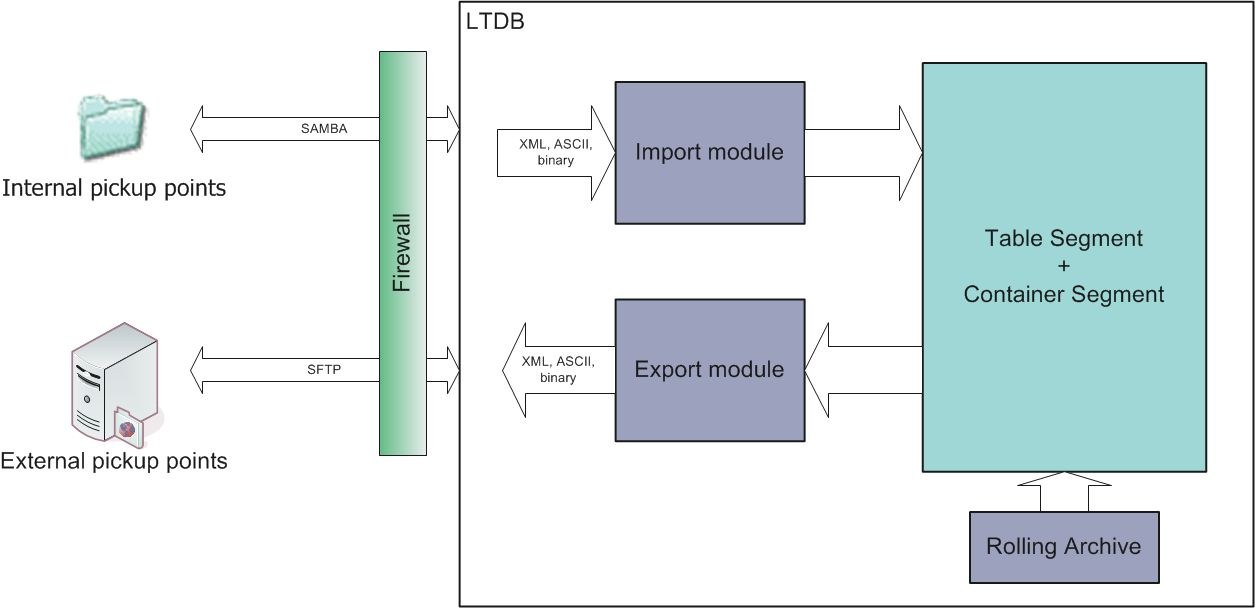

Instrument Data Management

To support various analyses and the long-term monitoring of the satellite payload, which often spans more than 10 years, we develop solutions for data management. These solutions include archive systems, such as databases and file servers, and various (mostly web-based) functions for data access, ordering and dissemination. These systems are then integrated into the data flows of the ground segment.

Project Participation

TerraSAR-X/TanDEM-X

- Project Management of “System Engineering and Calibration (SEC)”

- Systems Engineering for SEC and ground segment systems

- Shadow Engineering during the development, integration and assembly of the SAR Instrument

- Development and Operations of the “Instrument Operations and Calibration Segment (IOCS)”, one of the three main ground segment systems:

- Integration, verification and validation at IOCS and ground segment level

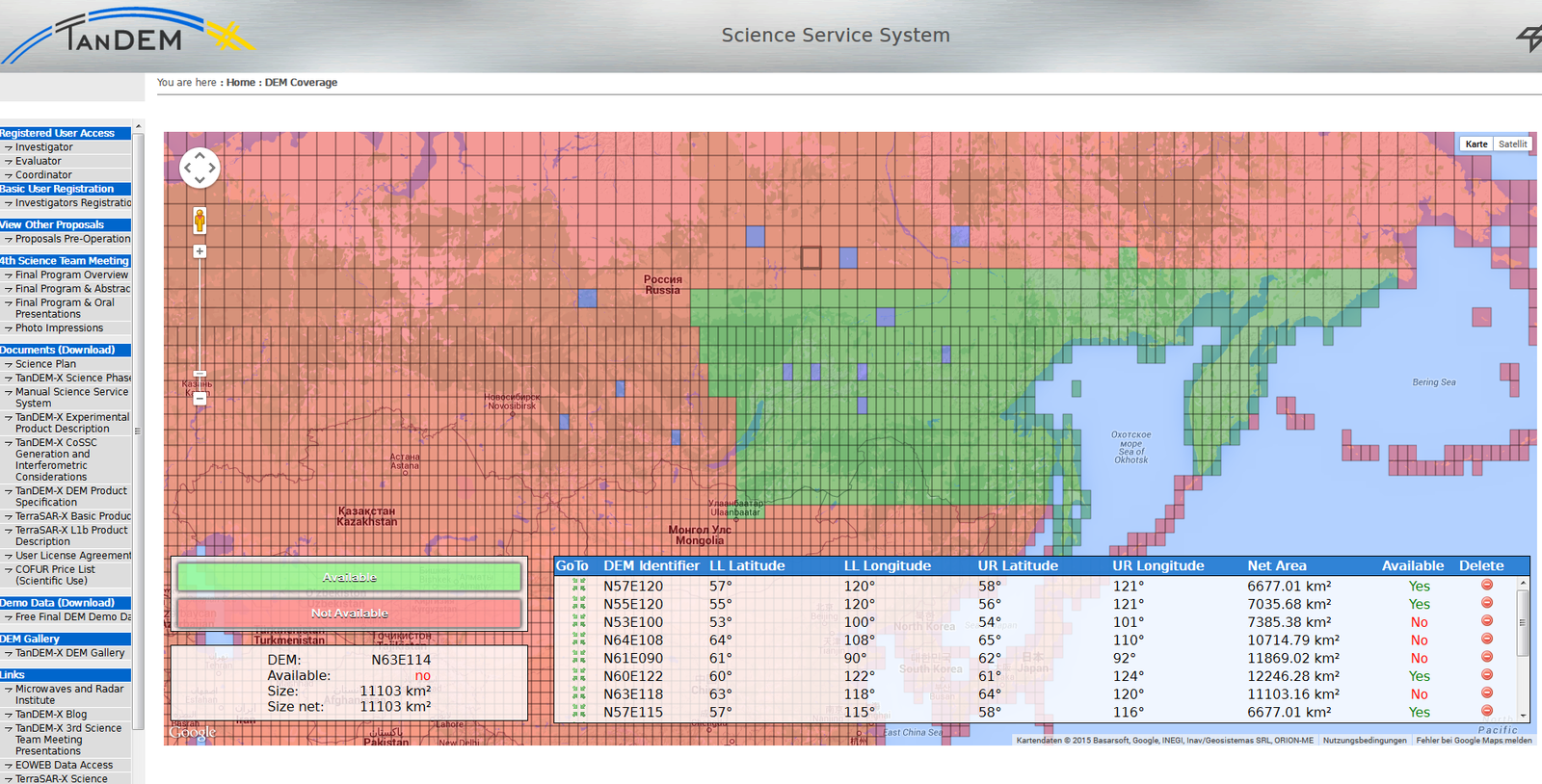

- Implementation of parts of the TanDEM-X science service software

Tandem-L

- Ground segment systems engineering management

- Requirements derivation and consolidation throughout the GS systems

- Functional architecture development coordination

- Project office support

- Lead requirements engineering and management of the Mission Requirements Document

- Concept development of “Instrument Operations and Calibration System (IOCS)”

- IOCS project management

- Systems engineering management

- IOCS functional decomposition

- SAR Payload operations and data take commanding

- SAR system verification

PAZ

- PAZ project management in DLR

- Systems engineering for the instrument operations and calibration and verification (IO&CALVER) software

- Provision of the IO&CALVER software:

- SAR system verification software

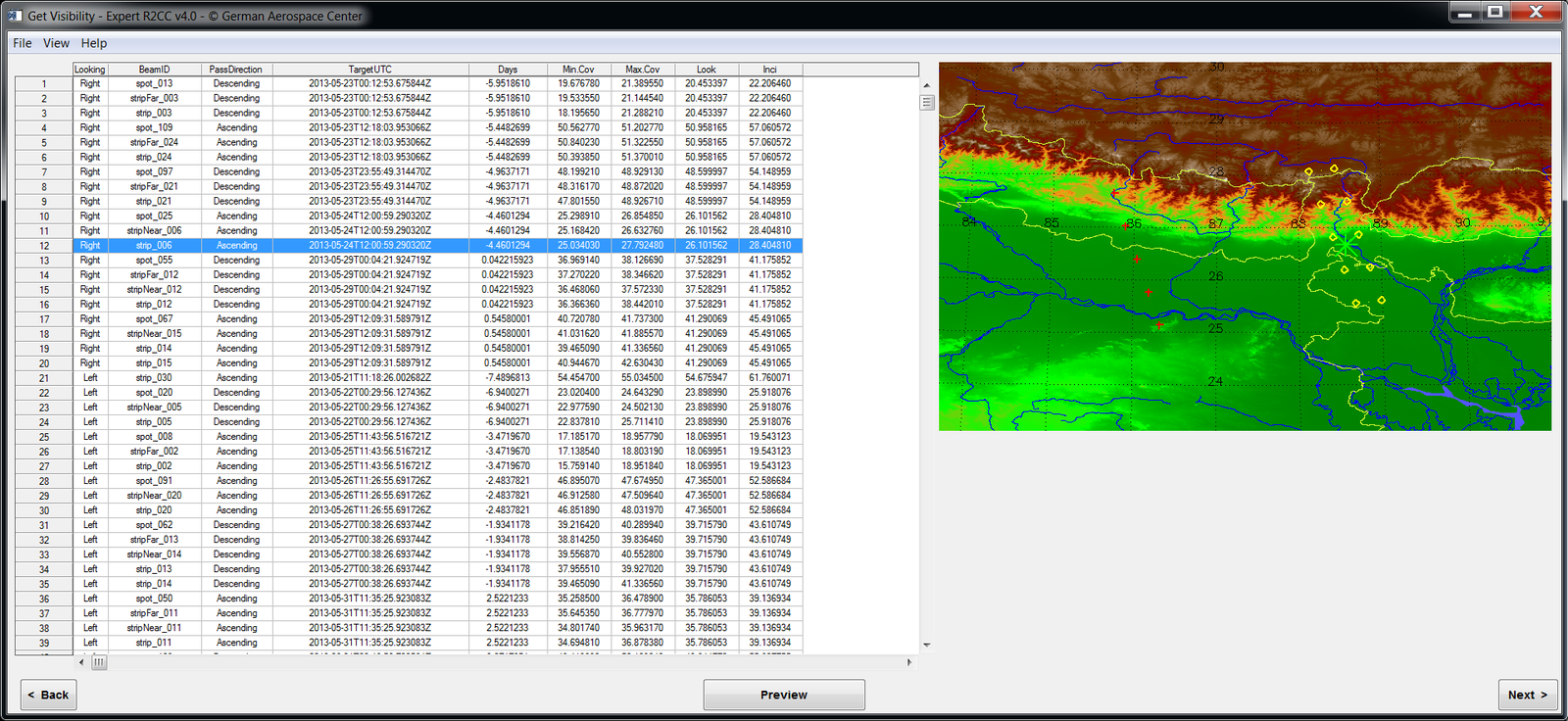

- Request to Command Converter (R2CC)

- Expert R2CC for the exploitation of the full SAR instrument flexibility

- Data warehouse for SAR relevant information